Serverless AI News Engine

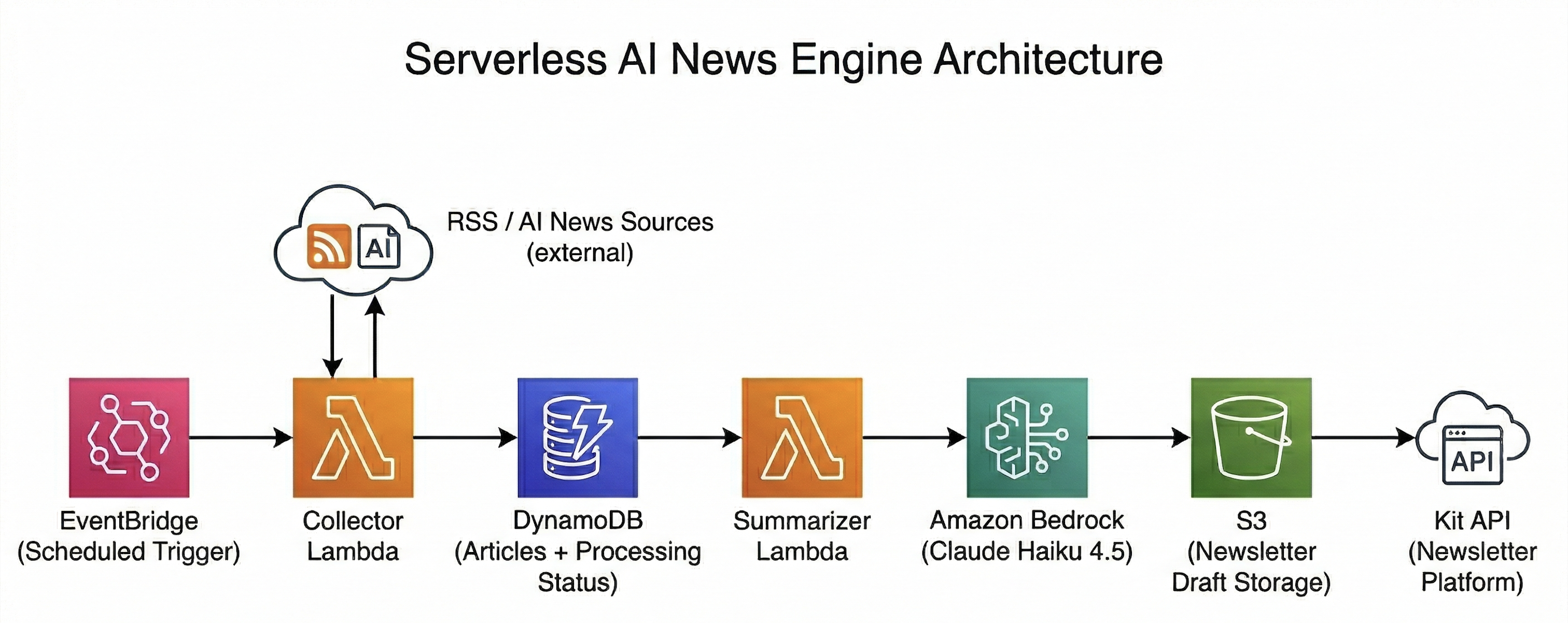

A serverless AWS pipeline that collects, processes, and summarizes AI news into a structured newsletter using Amazon Bedrock.

Overview

This project was built to automate the process of collecting AI news, filtering what matters, and generating a newsletter draft with minimal manual effort. The system runs on a scheduled, event-driven architecture using AWS services and produces a ready-to-review HTML output stored in S3 before delivery.

Architecture

How it works

- Amazon EventBridge schedules the pipeline to trigger collection and processing at defined intervals.

- Collector Lambda fetches articles from RSS feeds and extracts metadata such as title, description, source, and URL.

- Articles are stored in DynamoDB with `processed = false`, using conditional writes for idempotency and TTL for automatic cleanup.

- Summarizer Lambda retrieves unprocessed articles from DynamoDB for further processing.

- Amazon Bedrock (Claude Haiku 4.5) processes the articles as a batch, selecting the key stories and generating a summary for each.

- A full HTML newsletter is generated from the structured output.

- The generated newsletter is stored in Amazon S3 to enable preview and validation of the output.

- The newsletter is sent to Kit via API as a draft broadcast using securely managed credentials.

- Articles are marked as processed in DynamoDB to prevent reprocessing in subsequent runs.
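
The collection and storage steps above can be sketched roughly as follows. This is a minimal illustration, not the project's actual code: the `article_id` key (a SHA-256 hash of the URL), the `expires_at` TTL attribute, the 7-day retention window, and the function names are all assumptions made for the example. It only builds the request; nothing here talks to AWS.

```python
import hashlib
import time
import xml.etree.ElementTree as ET
from typing import Optional

TTL_DAYS = 7  # assumed retention window; the README does not state one


def parse_rss(xml_text: str, source: str) -> list:
    """Extract title, description, source, and URL from an RSS 2.0 document."""
    root = ET.fromstring(xml_text)
    articles = []
    for item in root.iter("item"):
        url = (item.findtext("link") or "").strip()
        if not url:
            continue  # without a URL there is nothing to deduplicate on
        articles.append({
            "title": (item.findtext("title") or "").strip(),
            "description": (item.findtext("description") or "").strip(),
            "source": source,
            "url": url,
        })
    return articles


def build_put_request(table: str, article: dict, now: Optional[int] = None) -> dict:
    """Build keyword arguments for a DynamoDB PutItem call (low-level client format)."""
    now = int(time.time()) if now is None else now
    # Hypothetical key scheme: the same URL always maps to the same item.
    article_id = hashlib.sha256(article["url"].encode("utf-8")).hexdigest()
    return {
        "TableName": table,
        "Item": {
            "article_id": {"S": article_id},
            "title": {"S": article["title"]},
            "description": {"S": article["description"]},
            "source": {"S": article["source"]},
            "url": {"S": article["url"]},
            "processed": {"BOOL": False},
            # TTL attributes are epoch seconds stored as a Number.
            "expires_at": {"N": str(now + TTL_DAYS * 86400)},
        },
        # Reject the write if the item already exists, so reruns are harmless.
        "ConditionExpression": "attribute_not_exists(article_id)",
    }
```

With boto3, the result could be passed as `boto3.client("dynamodb").put_item(**request)`. A duplicate then fails with a `ConditionalCheckFailedException` error code, which the collector can catch and ignore. TTL would also need to be enabled on the table for the chosen attribute.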

Key decisions

- Separated collection and processing into independent Lambda functions for better control and testability.

- Used DynamoDB for state tracking and idempotent ingestion.

- Processed articles in batches so the model sees related stories together when selecting and summarizing, and fewer model calls are needed.

- Stored generated output in S3 to preview results independently of the delivery platform.

- Designed the system to remain flexible and platform-agnostic for different newsletter providers.
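
One way to picture the last two decisions is a small provider interface that sits behind the S3 preview step. The sketch below is an assumption about how such a seam could look, not the project's implementation: `DraftPublisher`, `InMemoryPublisher`, and `deliver` are hypothetical names, and no real provider API is called.

```python
from dataclasses import dataclass, field
from typing import Callable, Protocol


class DraftPublisher(Protocol):
    """Hypothetical interface: anything that can accept an HTML draft."""

    def publish_draft(self, subject: str, html: str) -> str:
        """Create a draft and return a provider-specific identifier."""
        ...


@dataclass
class InMemoryPublisher:
    """Stand-in provider for tests; a real adapter would call the provider's API."""

    drafts: list = field(default_factory=list)

    def publish_draft(self, subject: str, html: str) -> str:
        self.drafts.append((subject, html))
        return f"draft-{len(self.drafts)}"


def deliver(
    subject: str,
    html: str,
    save_preview: Callable[[str], object],
    publisher: DraftPublisher,
) -> str:
    """Store the preview first, then hand the same HTML to the provider."""
    save_preview(html)  # e.g. a function that writes the HTML to S3
    return publisher.publish_draft(subject, html)
```

For example, `deliver("Weekly AI news", html, saved.append, InMemoryPublisher())` records the preview in a local list and returns `"draft-1"`. Switching newsletter providers would then mean writing another class with the same `publish_draft` method, leaving the summarizer and the preview step unchanged.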